Caltech’s LEONARDO Is a Bipedal Robot That Can Skateboard and Slackline

from hackster.io

The traditional portrait has existed for thousands of years in various forms, including sculptures, paintings, and, with the advent of cameras, photos.

Yet the format has remained largely unchanged: people simply snap a photo and either have it recreated physically at a later date or just share it digitally. Inspired by other automatons such as Patrick Tresset's Paul the Robot, Felix Fisgus and Joris Wegner teamed up to make their own drawing robot, the Pankraz Piktograph, which could be used at fairs and trade shows to provide an innovative take on the regular selfie box/photo booth concept. It was also intended to showcase ideas in STEAM subjects and demonstrate the team's technical know-how.

Drawing machines can operate in myriad ways, including a generic X/Y gantry, pulleys that tug and release the central drawing tool from two sides, or an arm driven by a pair of motors that actuate its joints to position the drawing head.

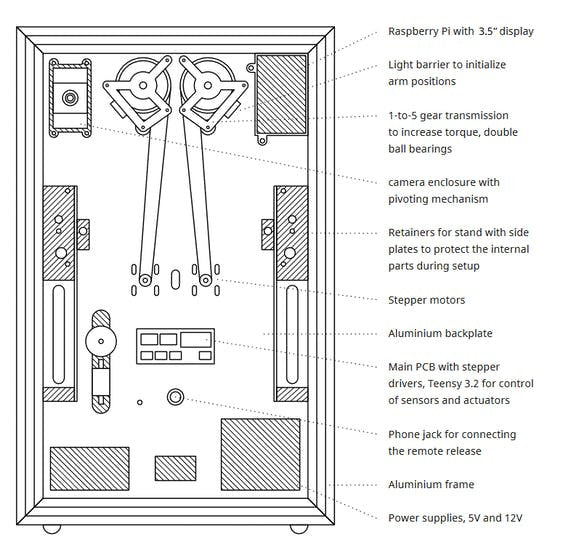

This last method was chosen due to the clean aesthetic of the pair of arms and the elegance of a floating pen plotter. Their solution has two motors at the base of the machine's platform that rotate the five attached linkages, moving the pen closer to or further from the base and from side to side for a full range of motion. The team noted in their paper that the system strongly resembles a pair of human arms holding a pen, further contributing to a more organic design.

Near the top of the enclosure sits a Raspberry Pi Camera Module, set in a rotating housing that lets users adjust it to their height. It can pivot in five-degree increments and has a spring that keeps the camera in the desired position.

Just like the cameras of old, the Pankraz Piktograph features a single-button remote that connects to the system. When the button is pressed, a digital photo is captured and displayed on a 3.5" LCD screen. If the user likes the resulting photo, another press will begin the drawing process.

However, sketching an image that contains thousands of individual pixels one-by-one would take a very long time and is not conducive to how a pen is normally used. So before anything happens, the administrator of the device can configure the machine to draw a photo in one of many styles.

These can range from a basic outline by using quantization and a Canny filter all the way to creating a filled-in portrait with cross-hatched shading or even random lines.
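To make the idea of turning pixels into pen strokes concrete, here is a minimal sketch of one possible approach: quantize a grayscale image into a few tone bands, then cover the darkest bands with horizontal strokes. It is a simplified illustration, not the team's implementation. The function names `quantize` and `hatch_segments` are hypothetical, it uses plain nested lists instead of an imaging library, and it only produces strokes in one direction (true cross-hatching would add a second pass at another angle).

```python
def quantize(gray, levels):
    """Map 0..255 grayscale values onto `levels` equal tone bands (0 = darkest)."""
    return [[min(v * levels // 256, levels - 1) for v in row] for row in gray]


def hatch_segments(bands, max_band, row_step=1):
    """Return horizontal pen strokes over runs of pixels with band <= max_band.

    Each stroke is ((x0, y), (x1, y)) in pixel coordinates. `row_step`
    controls the spacing between hatch lines (an illustrative parameter).
    """
    segments = []
    for y in range(0, len(bands), row_step):
        start = None  # x where the current dark run began, if any
        for x, band in enumerate(bands[y]):
            dark = band <= max_band
            if dark and start is None:
                start = x
            elif not dark and start is not None:
                segments.append(((start, y), (x - 1, y)))
                start = None
        if start is not None:  # run reaches the right edge of the image
            segments.append(((start, y), (len(bands[y]) - 1, y)))
    return segments
```

Each run of dark pixels becomes a single stroke, so the pen draws a handful of lines per row rather than visiting every pixel.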

Once the Raspberry Pi 3 has finished computing the vector drawing, the machine needs to output it onto a piece of paper.

As mentioned previously, the arms are driven by a pair of standard NEMA17 stepper motors, each connected to a transmission with a one-to-five gear ratio that helps increase the torque. Both are controlled by a single Teensy 3.2 development board that is attached to two stepper motor drivers and a set of light sensors for initial calibration.
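As a rough illustration of what the gear reduction means for positioning, the sketch below converts a desired arm rotation into motor steps. Only the one-to-five ratio comes from the article. The 200 full steps per revolution (a common figure for NEMA17 motors) and the 16x microstepping setting are assumptions, and the function names are hypothetical.

```python
# Assumed values: 200 full steps/rev is typical for NEMA17 motors, and the
# microstepping factor is a guess. Only the 1:5 ratio is from the article.
FULL_STEPS_PER_REV = 200
MICROSTEPS = 16
GEAR_RATIO = 5  # the motor turns five times for one turn of the arm

STEPS_PER_ARM_REV = FULL_STEPS_PER_REV * MICROSTEPS * GEAR_RATIO


def steps_for_arm_angle(delta_deg: float) -> int:
    """Signed number of microsteps needed to rotate the arm by delta_deg."""
    return round(delta_deg / 360.0 * STEPS_PER_ARM_REV)


def arm_resolution_deg() -> float:
    """Arm rotation produced by a single microstep, in degrees."""
    return 360.0 / STEPS_PER_ARM_REV
```

Under these assumptions, `steps_for_arm_angle(90)` returns 4000, and the sign of the result gives the direction of rotation. The reduction trades speed for both torque and finer angular resolution at the arm.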

To determine how far to rotate each motor, and in which direction, to reach a certain point on the page, Fisgus and Wegner had to harness the power of inverse kinematics. Essentially, by knowing the length of each arm segment, the spacing between the motors, and the joint angles, the position of the pen can be calculated.

This process must happen in reverse to go from a known position to the amount of necessary rotation, hence the "inverse" in "inverse kinematics".
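The inverse calculation can be sketched for a generic symmetric two-motor linkage using the law of cosines. This is an illustration under stated assumptions, not the team's code: the motors are assumed to sit at (-spacing/2, 0) and (+spacing/2, 0), both arms share the same segment lengths `l1` (motor to elbow) and `l2` (elbow to pen), the elbows bend outward, and the names `five_bar_ik` and `elbow_position` are hypothetical.

```python
import math


def five_bar_ik(x, y, base_spacing, l1, l2):
    """Return (theta_left, theta_right) in radians for pen position (x, y).

    Angles are measured counter-clockwise from the +x axis at each motor.
    """
    angles = []
    # elbow_sign picks the "elbows outward" solution for each side.
    for base_x, elbow_sign in ((-base_spacing / 2, +1), (base_spacing / 2, -1)):
        dx, dy = x - base_x, y
        r = math.hypot(dx, dy)  # distance from this motor to the pen
        if r == 0 or r > l1 + l2 or r < abs(l1 - l2):
            raise ValueError("target is outside the reachable workspace")
        phi = math.atan2(dy, dx)  # direction from motor to pen
        # Law of cosines: angle between the motor-to-pen line and the first link.
        cos_alpha = (l1 ** 2 + r ** 2 - l2 ** 2) / (2 * l1 * r)
        alpha = math.acos(max(-1.0, min(1.0, cos_alpha)))
        angles.append(phi + elbow_sign * alpha)
    return tuple(angles)


def elbow_position(base_x, theta, l1):
    """Forward step for one arm: where the elbow ends up for a motor angle."""
    return (base_x + l1 * math.cos(theta), l1 * math.sin(theta))
```

A quick sanity check is to run the forward step on the result: the distance from each computed elbow to the target point should equal `l2`.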

As seen in the team's demonstration video, their Pankraz Piktograph project is great at quickly drawing portraits in a wide variety of styles. Below is a series of images that showcase everything from simple hatching to polygons and even a classic old "printer look".

You can read about this project in more detail here on Fisgus' website.
